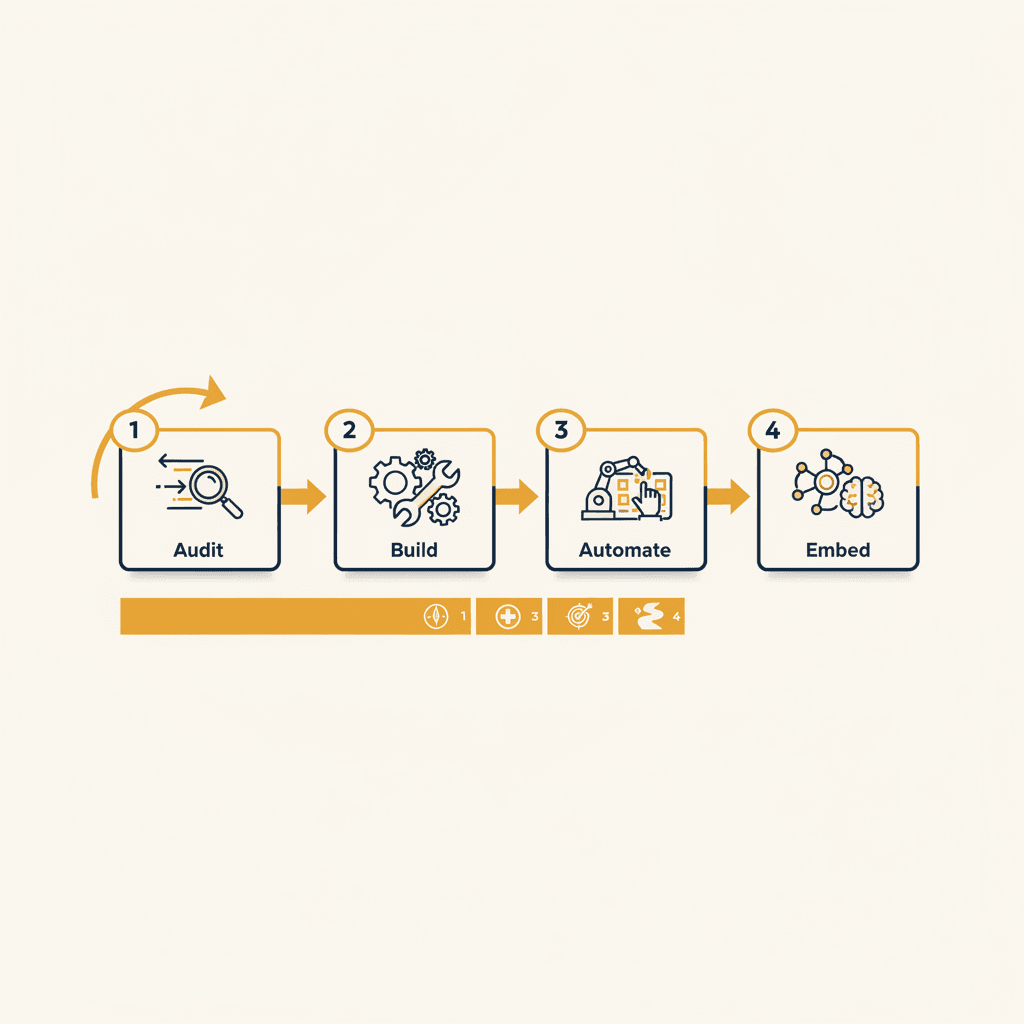

Audit · Build · Automate · Embed: How We Actually Run AI Engagements

Most AI agencies sell you one of these four stages and call it a project. We run them as one continuous engagement because that's what actually works for established firms.

The framework came from working with three completely different operations: a global market research firm with 5 country offices and 40+ years of operating history, a boutique entertainment law firm handling mid-six-figure contracts, and a NYC real estate operation drowning in lead volume. Three different industries, three different problem shapes, same engagement structure.

The Audit Stage: Diagnostic Before Code

Most engagements start here. Most should.

Established firms have data, complexity, and trust they've built over decades. They don't need a six-week demo. They need someone to map the customer-facing workflows, inventory the tools and data, identify the AI capability gaps, and produce a 90-day prioritized roadmap that's honest about what's possible versus what's hype.

The audit covers four areas: workflow mapping, data audit, AI capability gap analysis, and customer-facing process review. We document every tool in the stack. Map how data flows between systems. Identify where manual tasks eat time and where AI can create real leverage.

The audit is what lets us scope Build properly. It's also what lets a client say no to half the things they thought they wanted.

For the market research firm, the audit revealed that 60% of analyst time was spent reformatting client deliverables across different templates. For the law firm, it was contract review prep work that took 3 hours per deal. For the real estate operation, it was lead qualification calls that came in during off-hours, when nobody was available to take them.

The audit produces a prioritized roadmap. Not everything gets built. Not everything should.

The Build Stage: Custom Apps That Actually Work

Build is the custom application work. Sometimes that's a system built from scratch. Often it's rapid deployment of our pre-built systems — content engines, BDR agents, proposal automation, meeting tools — branded and integrated into the client's stack.

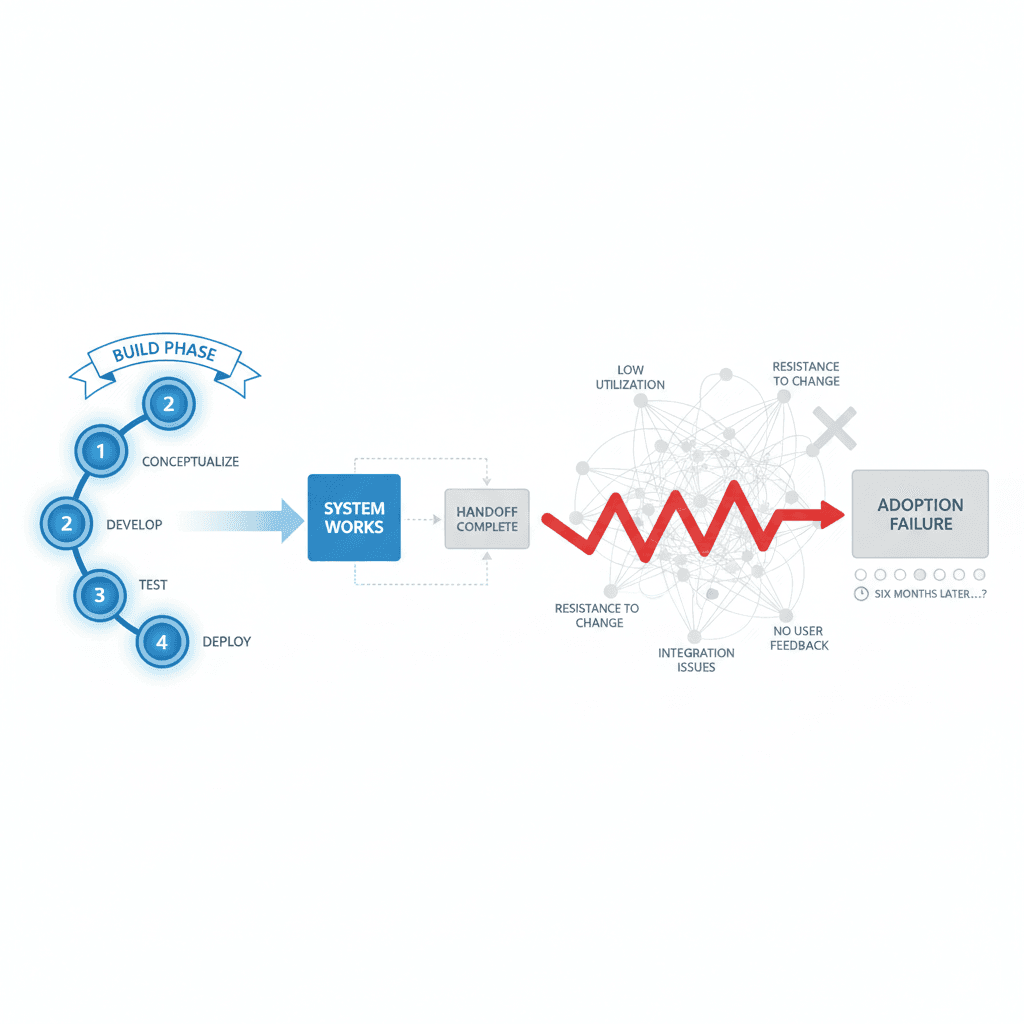

The Build phase is the most visible part of the engagement. It's also not where the value lives.

For the market research firm, we built a document transformation pipeline that takes raw research outputs and generates client-ready reports in multiple formats. For the law firm, we created a contract analysis tool that flags key terms and generates risk assessments. For the real estate operation, we deployed a lead qualification agent that handles initial screening calls 24/7.

Each system gets enterprise-level security. Each system can be self-hosted or cloud-deployed based on compliance requirements. Each system integrates with existing tools rather than replacing them.

Build success is measured by one metric: does the system work in production? Not in a demo, not in a sandbox, but in the actual workflow with real data and real users.

The Automate Stage: Workflow Connection

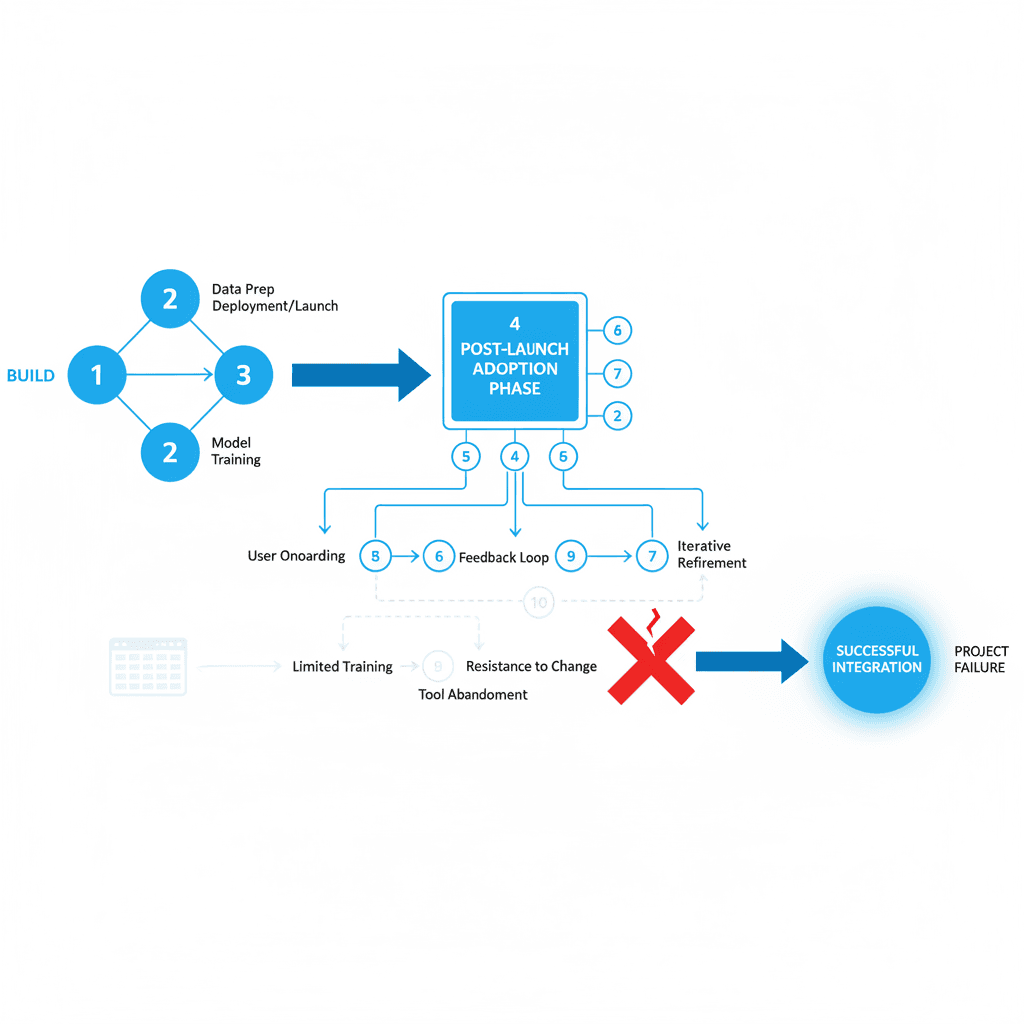

Automate is where most projects fail invisibly. The AI works, but it lives in a tab nobody opens.

This stage connects the tools, the data, and the team. RevOps integration. GTM workflow automation. Client intake and onboarding pipelines. The plumbing that makes AI feel like part of the operation instead of something bolted on the side.

For the market research firm, automation meant connecting the document pipeline to their project management system. New research gets processed automatically when uploaded. Clients get notifications when deliverables are ready. Analysts see completed work in their existing dashboard.

For the law firm, automation connected contract analysis to their billing system. Every contract review generates time entries. Risk flags create calendar reminders. Client communications happen through existing email templates.

For the real estate operation, automation meant lead data flowing from qualification calls directly into their CRM. Qualified leads get assigned to agents automatically. Follow-up sequences trigger based on conversation outcomes. No manual data entry.
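
The handoff described above can be sketched in a few lines. This is a minimal illustration, not the system we deployed: the outcome labels, the follow-up sequence names, and the round-robin assignment rule are all assumptions made for the example.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class Lead:
    name: str
    outcome: str  # hypothetical labels: "qualified", "callback", "not_a_fit"


# Hypothetical mapping from a call outcome to a follow-up sequence name.
FOLLOW_UPS = {
    "qualified": "schedule_showing",
    "callback": "retry_call_next_day",
    "not_a_fit": "quarterly_newsletter",
}


def route_leads(leads, agents):
    """Assign qualified leads round-robin and pick a follow-up for every lead.

    Assumes `agents` is a non-empty list of agent names.
    """
    next_agent = cycle(agents)
    records = []
    for lead in leads:
        # Only qualified leads are handed to a human agent.
        assigned = next(next_agent) if lead.outcome == "qualified" else None
        records.append({
            "lead": lead.name,
            "agent": assigned,
            # Unknown outcomes fall back to manual review instead of failing.
            "follow_up": FOLLOW_UPS.get(lead.outcome, "manual_review"),
        })
    return records
```

In practice each record would be written to the CRM rather than returned as a dictionary, and the assignment rule would follow whatever the client already uses (territory, listing, workload).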

The goal isn't to replace existing workflows. The goal is to make AI feel like it belongs there.

The Embed Stage: Making It Stick

Embed is the part that makes the rest worth it. It's also the part most firms underprice.

Team training happens here. Not one-time sessions, but ongoing education as people discover edge cases and new use patterns. Documentation gets written — the kind that actually gets used. Adoption metrics get tracked. Systems get refined based on real usage patterns.

We don't disappear at launch. We stay close until usage becomes natural.

AI projects fail at adoption, not at build. Treat the engagement that way and the project actually lands.

For the market research firm, Embed meant training 12 analysts across 5 countries on the new document pipeline. Creating video tutorials. Setting up Slack channels for questions. Tracking usage weekly and adjusting the system based on feedback.

For the law firm, it meant training partners and associates on contract analysis workflows. Creating approval processes for AI-generated risk assessments. Building confidence in the system through gradual rollout.

For the real estate operation, it meant training agents on lead handoff processes. Creating dashboards that show qualification outcomes. Refining the agent's conversation flows based on real interactions.

Success metrics shift from "does it work?" to "are people using it?" Usage drives iteration. Iteration drives adoption. Adoption drives results.

Why the Framework Works

You can enter at any stage. Most clients start with Audit and grow from there. Some come to us with clear build requirements. Others need automation work on existing AI systems.

The methodology comes from a single belief: AI projects fail at adoption, not at build. The technology works. The integration is possible. What doesn't happen on its own is the team changing its behavior.

Running all four stages as one engagement solves this. Audit ensures we build the right thing. Build creates something that actually works. Automate makes it feel native. Embed makes it stick.

Three different industries, same framework, consistent results. The engagement structure scales across problem types because it's built around human adoption, not technical capability.

Key Questions

Q: How long does the complete Audit · Build · Automate · Embed process take?

A: Typically 90-120 days for the full engagement, though timeline depends on system complexity and team size. Audit takes 2-3 weeks, Build 4-6 weeks, Automate 2-4 weeks, and Embed is ongoing with intensive support for the first month post-launch.
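
As a rough check on how the stage ranges add up to the overall figure, here is a small sketch. It assumes the stages run back to back with no overlap and treats the intensive first month of Embed as 4 weeks; both are simplifications for the arithmetic, not a scheduling rule.

```python
# Stage durations in weeks as (minimum, maximum), taken from the answer above.
STAGES = {
    "Audit": (2, 3),
    "Build": (4, 6),
    "Automate": (2, 4),
    "Embed (intensive first month)": (4, 4),  # assumption: one month is about 4 weeks
}


def total_days(stages):
    """Sum the minimum and maximum stage lengths and convert weeks to days."""
    low = sum(lo for lo, _ in stages.values()) * 7
    high = sum(hi for _, hi in stages.values()) * 7
    return low, high


print(total_days(STAGES))  # (84, 119)
```

That range of 84 to 119 days is where the "typically 90-120 days" figure comes from.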

Q: Can we start with Build if we already know what AI system we want?

A: Yes, but we recommend at least a condensed audit phase. Most "clear requirements" change significantly once we map existing workflows and data flows. The audit often reveals simpler solutions or catches integration issues early.

Q: What's the difference between this and typical AI consulting?

A: Most AI consulting focuses on strategy or builds demos. We focus on production systems that teams actually use. Most consulting engagements end before the Embed stage, the ongoing support until adoption is real, and that stage is where we see the actual value created.

Q: How do you measure success in the Embed stage?

A: Usage metrics, not capability metrics. How many team members are using the system weekly? How has it changed their daily workflows? What manual tasks have been eliminated? Success means the AI feels invisible because it's become part of normal operations.
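
One way to make "how many team members are using the system weekly" concrete is a weekly active share. The sketch below is illustrative only: the event format (a user id plus a date) and the function name are assumptions, and a real version would read from whatever usage logs the deployed system produces.

```python
from collections import defaultdict
from datetime import date


def weekly_active_share(events, team_size):
    """Return {(iso_year, iso_week): share of the team active that week}.

    `events` is an iterable of (user_id, date) pairs, one per recorded use.
    """
    users_by_week = defaultdict(set)
    for user_id, day in events:
        iso = day.isocalendar()  # (ISO year, ISO week, ISO weekday)
        users_by_week[(iso[0], iso[1])].add(user_id)
    # Count each person once per week, however often they used the system.
    return {
        week: len(users) / team_size
        for week, users in sorted(users_by_week.items())
    }


# Hypothetical usage log for a team of four.
events = [
    ("ana", date(2024, 1, 1)),
    ("ana", date(2024, 1, 2)),
    ("ben", date(2024, 1, 3)),
    ("ana", date(2024, 1, 8)),
]
print(weekly_active_share(events, team_size=4))
# {(2024, 1): 0.5, (2024, 2): 0.25}
```

A share that falls week over week, as in this toy data, is the signal to revisit the workflow before adding more training.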

Q: What happens if the team doesn't adopt the AI system during Embed?

A: We treat low adoption as a system design problem, not a training problem. We iterate the interface, adjust workflows, or sometimes rebuild components based on user feedback. The engagement doesn't end until the system is being used consistently.

The stages

1. Audit: The diagnostic. Map where AI lives in your stack, what's working, and where the leverage is, before anyone writes a line of code. Workflow + data audit. AI capability gap analysis. Customer-facing process review. 90-day prioritized roadmap.

2. Build: Custom AI applications, built properly. Or rapid spin-up of pre-built systems (content engine, newsletter system, BDR agent, meeting tool, proposal automation). Enterprise-secure deployment. Self-hosted or cloud.

3. Automate: Operations that scale without scaling headcount. RevOps, GTM workflow automation, client intake and onboarding pipelines, content engines, lead-gen. Connect the tools, the data, and the team.

4. Embed: The part that makes the rest worth it. Team training. Documentation. Adoption metrics. Iterative refinement. We stay close until usage is real.
