Why Most AI Projects Fail at Adoption, Not at Build

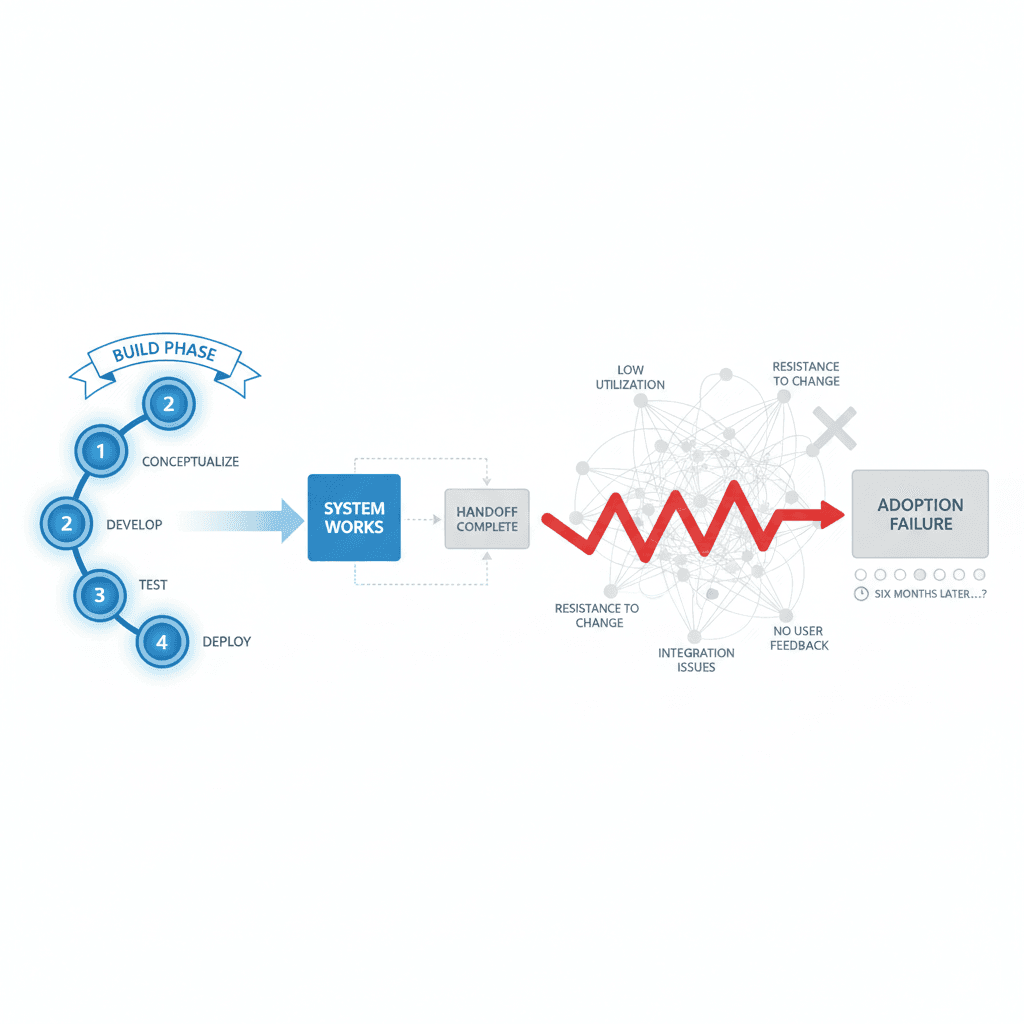

Most AI agencies ship and leave. Six months later, half the team has gone back to the spreadsheet. The real work happens after launch — and that's where most projects die.

The system works perfectly. The demo wows the partners. The AI tool processes documents faster than anyone imagined. Six months later, half the team has quietly returned to the old spreadsheet.

This is the pattern we see across every industry we work in: market research, law, real estate, professional services. The build phase finishes on time and on budget. The technical requirements are met. The handover presentation is flawless. And then the tool dies inside the organization.

Most AI agencies don't price this reality into their engagements. They ship and leave. The contract defines deliverables as working software, not working teams. The slides look great. The system passes user acceptance testing. But adoption is treated as someone else's problem.

In our experience, the technical implementation is roughly 30% of the work. Cultural adoption is the other 70%.

The Adoption Death Spiral

We've watched this play out at SIS International, at the boutique entertainment law firm we work with, and at an NYC real estate firm. Three different industries. Same pattern.

Week one post-launch: Everyone uses the new AI tool. It's new, it's fast, leadership is watching.

Week four: The early adopters are still using it. The skeptics have found reasons why their specific use case doesn't fit.

Week twelve: Only the champions and the people who built it are still using it regularly.

Week twenty-four: The old processes have quietly returned. The AI tool becomes the expensive solution that "didn't work for our team."

The technology didn't fail. The adoption strategy did.
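
One way to catch this pattern before week twenty-four is to check, at the same checkpoints, what share of the team is still doing real work in the tool. The Python below is a minimal sketch under stated assumptions: the log format (person, week), the team size, and the sample data are all hypothetical and chosen only to mirror the timeline above, not taken from a real engagement.

```python
from collections import defaultdict

# Checkpoints taken from the timeline above (weeks after launch).
CHECKPOINT_WEEKS = (1, 4, 12, 24)


def active_share_by_week(usage_log, team_size, weeks=CHECKPOINT_WEEKS):
    """Return {week: fraction of the team that did real work in the tool}."""
    users_by_week = defaultdict(set)
    for person, week in usage_log:
        users_by_week[week].add(person)  # a set counts each person once per week
    return {week: len(users_by_week[week]) / team_size for week in weeks}


# Hypothetical log for a team of 10: (person, week in which they used the tool).
usage_log = (
    [(f"p{i}", 1) for i in range(10)]    # week 1: everyone
    + [(f"p{i}", 4) for i in range(6)]   # week 4: early adopters
    + [(f"p{i}", 12) for i in range(3)]  # week 12: champions and builders
    + [(f"p{i}", 24) for i in range(1)]  # week 24: one holdout
)

shares = active_share_by_week(usage_log, team_size=10)
for week, share in shares.items():
    print(f"week {week:>2}: {share:.0%} of the team active")
```

A falling share between week one and week four is the cheapest signal to act on, because the skeptics' objections are still fresh and specific at that point.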

Why Build-and-Leave Fails

Implementing AI is no different from implementing any other piece of enterprise technology. It requires technical implementation, yes. But it requires at least as much work on cultural adoption, team buy-in, and the willingness to actually use the thing day-to-day.

The traditional AI agency model treats adoption like a training session. Two hours of demos. A user manual. Maybe a Slack channel for questions. Then the team is expected to transform their daily workflows overnight.

People don't work that way. Organizations don't work that way.

Real adoption requires understanding why someone reaches for Excel instead of the new AI tool. It means identifying the micro-moments where old habits kick in. It means building new muscle memory through repetition and support.

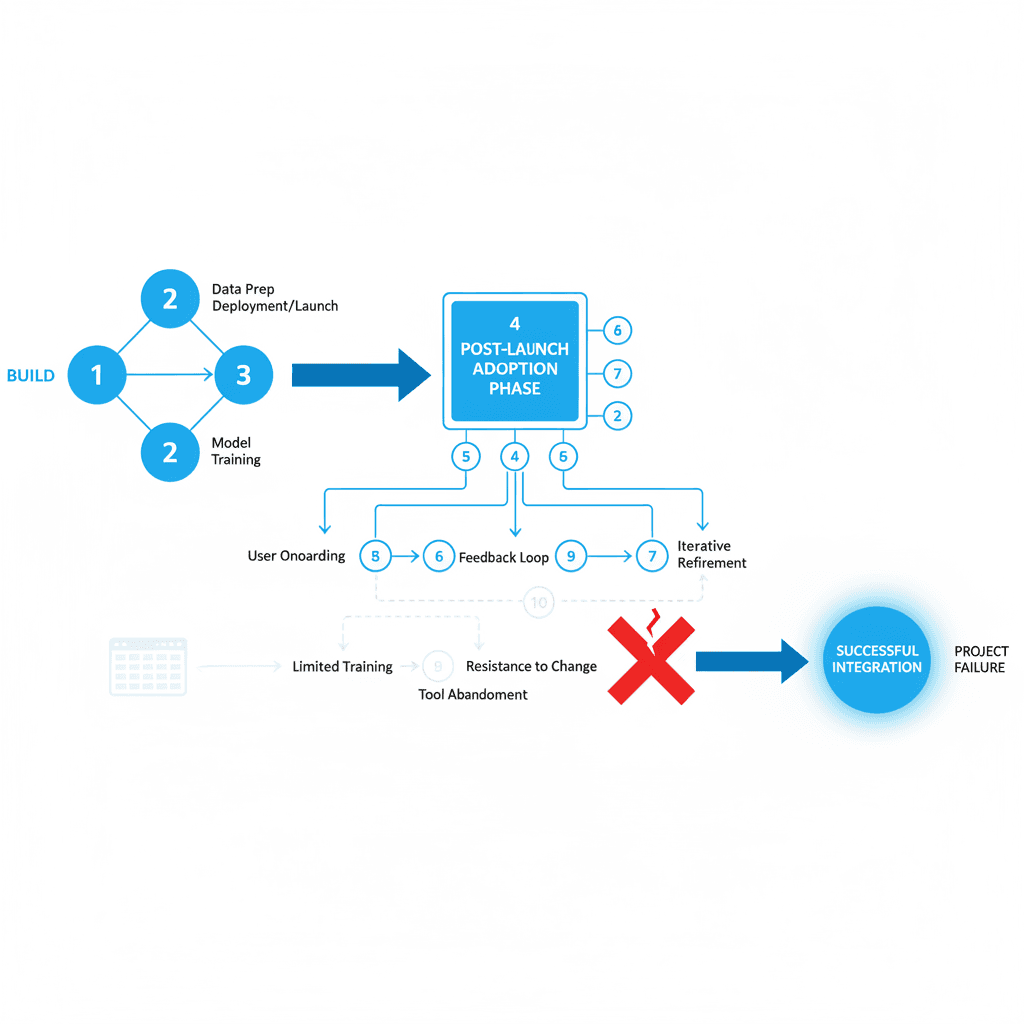

Embed: The Fourth Stage

This is why Embed exists as the fourth stage of how we engage with teams. After Audit, Build, and Deploy comes the part that makes the rest worth it.

Embed isn't maintenance. It's active adoption management. We stay close for the critical months when new tools either become habits or get abandoned.

Team training that goes beyond feature walkthroughs. We run working sessions where people use the AI tool on their actual work, not demo data. We identify the workflow friction points that make people revert to old methods.

Documentation that actually gets used. Most AI documentation explains what the system does. We document when and why to use it. Situational guidance that maps to real daily decisions.

Adoption metrics that matter. Not login counts or feature usage stats. We track behavioral change. Are people actually changing how they work? Are the promised time savings materializing?

Iterative refinement based on real usage patterns. The first version of any AI implementation is exactly that — a first version. Teams discover edge cases, workflow conflicts, and improvement opportunities only after they start using the tool in production.

What Works: Three Principles

Start Small, Prove Value Fast: We don't try to transform entire departments overnight. We identify one high-impact use case, get that working smoothly, then expand. Success builds momentum.

Champions Over Mandates: Top-down adoption mandates create compliance, not enthusiasm. We identify early adopters who become internal advocates. They solve adoption challenges from inside the team.

Measure Behavior, Not Activity: Usage statistics lie. Someone logging into a system doesn't mean they're changing how they work. We track outcome metrics — time saved, tasks eliminated, quality improvements.
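
The gap between activity and outcome metrics is easier to see with numbers. The Python below is a minimal sketch, not a description of our tooling: it assumes a hypothetical task log in which each record notes who did a task, whether they used the AI tool or the old method, and how long it took. The field names, the 60-minute baseline, and the sample week are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskRecord:
    person: str
    used_ai_tool: bool  # False means the task was done the old way
    minutes: float


def adoption_summary(records, logins, baseline_minutes):
    """Contrast an activity metric (logins) with behavior and outcome metrics."""
    total = len(records)
    ai_tasks = [r for r in records if r.used_ai_tool]
    # Behavior: what share of real tasks went through the new workflow?
    ai_task_share = len(ai_tasks) / total if total else 0.0
    # Outcome: minutes saved against the pre-launch baseline, AI tasks only.
    minutes_saved = sum(baseline_minutes - r.minutes for r in ai_tasks)
    return {
        "logins": logins,
        "ai_task_share": ai_task_share,
        "minutes_saved": minutes_saved,
    }


# Hypothetical week: plenty of logins, but most work is still done the old way.
records = [
    TaskRecord("ana", True, 20),
    TaskRecord("ana", True, 25),
    TaskRecord("ben", False, 60),
    TaskRecord("ben", False, 55),
    TaskRecord("cam", False, 65),
]
summary = adoption_summary(records, logins=14, baseline_minutes=60)
print(summary)
```

In this made-up week the login count looks healthy, yet only two of five tasks went through the tool and all of the time savings came from one person. That is the kind of picture a usage dashboard alone would hide.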

The Real Project Scope

If you're scoping AI for your organization and the agency you're talking to only discusses what they'll build, ask them what happens in month four. Ask how they measure adoption success. Ask what their process looks like when half the team stops using the tool.

The answer to those questions is the actual project.

The teams that succeed with AI are the ones that take it seriously as a change-management problem, not just a software-installation problem. They adapt. They learn. They experiment. They treat the first version as the start of the work, not the end.

Technology adoption is a human problem. The AI works. Getting humans to work with the AI — that's where the real expertise shows up.

Key Questions

Q: How long should we expect the adoption phase to take?

A: Plan for 3-6 months of active adoption management. Real behavior change takes time, and complex workflows need iterative refinement based on actual usage patterns.

Q: What's the difference between training and adoption management?

A: Training explains how to use the tool. Adoption management addresses why people don't use it, identifies workflow friction, and builds new habits through ongoing support.

Q: How do we measure adoption success?

A: Track outcome metrics, not activity metrics. Measure time saved, tasks eliminated, or quality improvements rather than login counts or feature usage statistics.

Q: What if our team resists the new AI tools?

A: Resistance usually signals workflow misalignment, not technology aversion. We identify friction points, adjust implementation, and work with champions to demonstrate value.

Q: Should we implement AI across the entire organization at once?

A: Start with one high-impact use case. Prove value, build momentum, then expand. Organization-wide rollouts often fail because they try to change too much too fast.
